Alibaba’s Qwen-Image Launch: A Breakthrough in AI Visual Creation

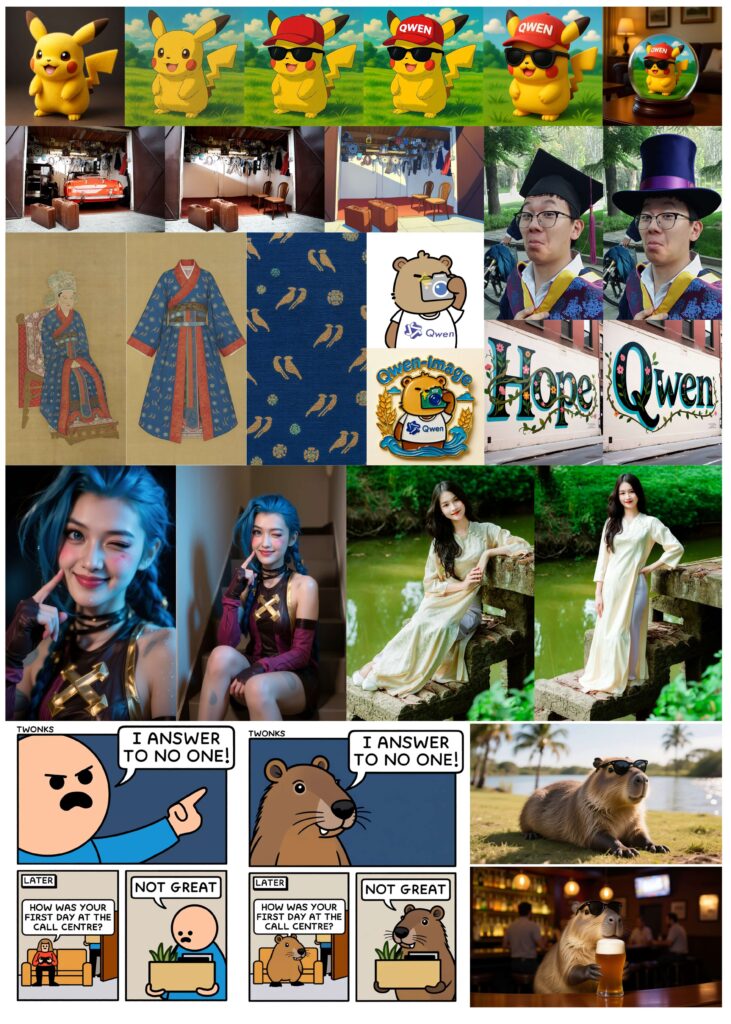

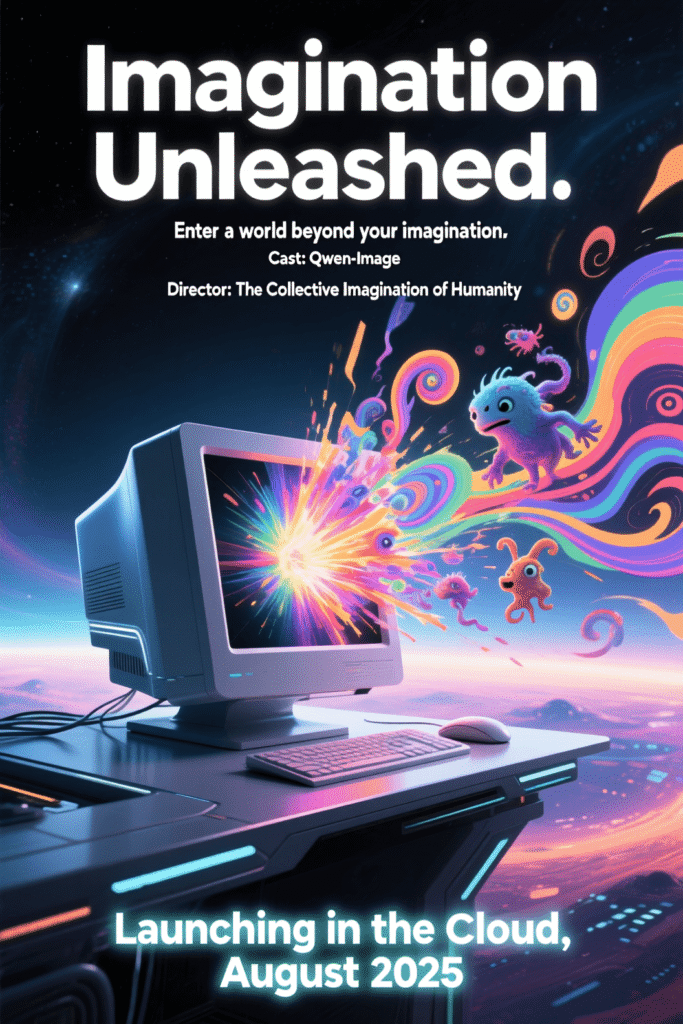

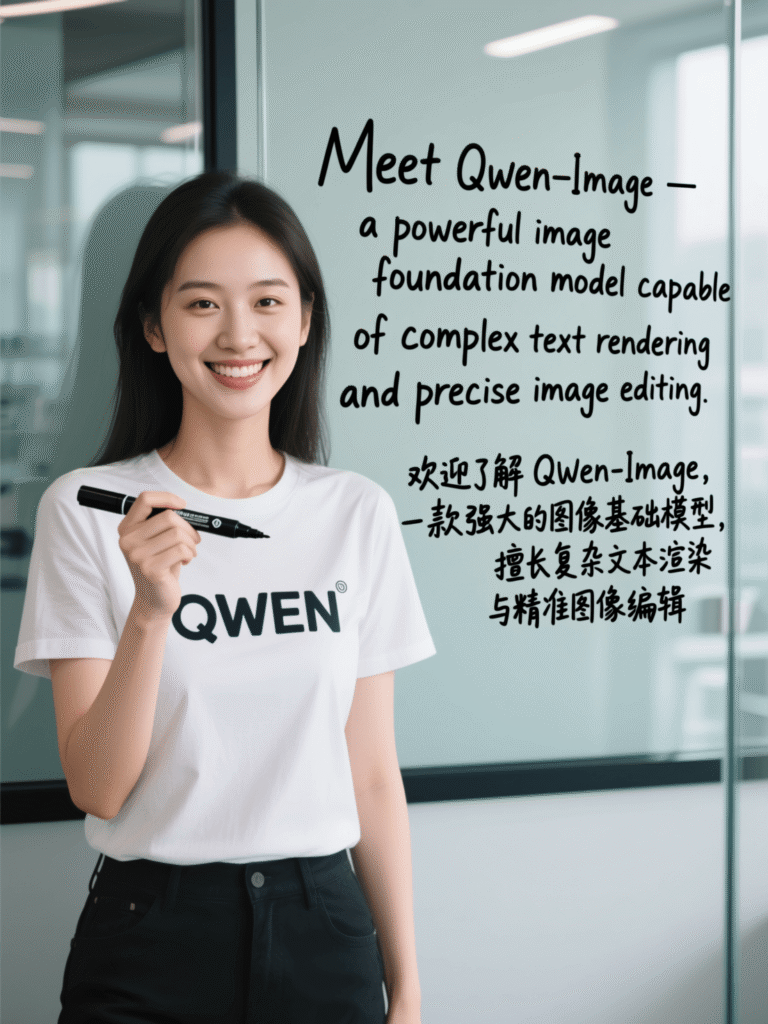

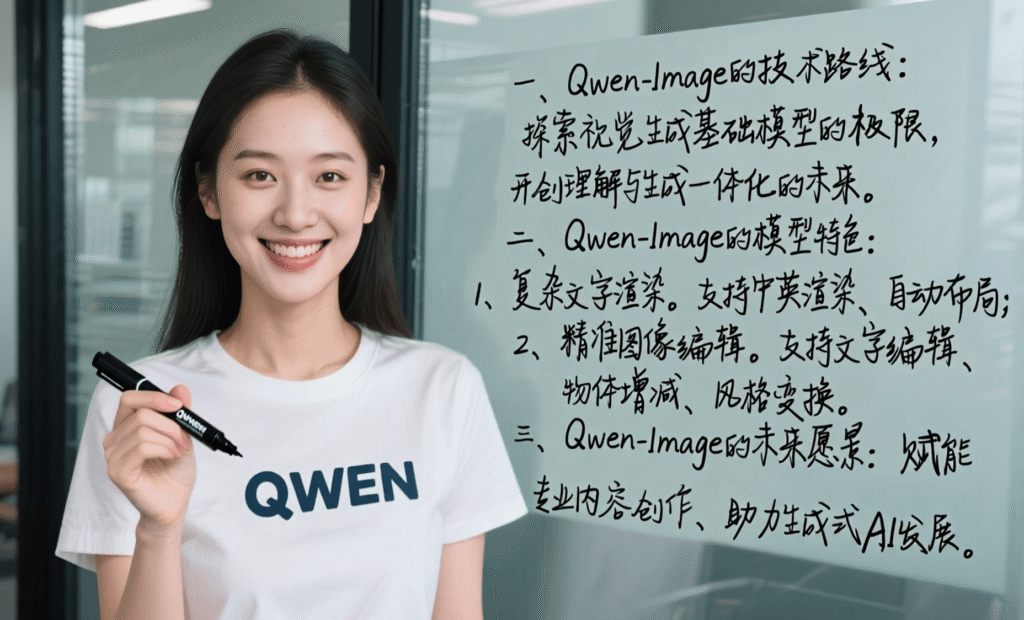

AI-generated visuals are reshaping industries from marketing to gaming, and Alibaba’s Qwen team has just raised the bar. On August 4, 2025, the team unveiled Qwen-Image, a 20-billion-parameter Multimodal Diffusion Transformer (MMDiT) model aimed at two persistent weaknesses of AI image generation: rendering complex text accurately and editing images with precision. Where many models distort text or produce inconsistent edits, Qwen-Image is reported to deliver crisp, contextually coherent visuals, which could make it a game-changer for creators and developers. Its release under Apache 2.0 makes it a powerful, accessible tool at a time when open-source AI is gaining momentum. The launch also signals Alibaba’s ambition to lead in the competitive generative AI landscape by giving designers, marketers, and researchers finer creative control.

Key Specs & Features

| Feature | Specification |
|---|---|
| Model Size | 20 billion parameters |
| Architecture | Multimodal Diffusion Transformer (MMDiT) |
| Text Rendering | Multi-line layouts, paragraph-level semantics, supports English and Chinese |
| Image Editing | Style transfer, object addition/removal, text editing, pose adjustment |
| Benchmarks | GenEval, DPG, OneIG-Bench, GEdit, ImgEdit, GSO, LongText-Bench, TextCraft |
| Performance | State-of-the-art in image generation and editing, excels in text rendering |
| Availability | Open-source (Apache 2.0), accessible via Qwen Chat (“Image Generation” mode) |
| Resolution Support | 256×256 to 1536×1536 pixels, customizable aspect ratios (e.g., 1:1, 16:9, 4:3) |
| Use Cases | Posters, infographics, branding, e-commerce visuals, educational materials |

Noteworthy Features: Superior text rendering ensures precise, readable text in images, from book covers to posters, rivaling GPT-4o in English. Consistent image editing maintains visual and semantic integrity during complex operations like object removal or style transfer, ideal for professional-grade design. Open-source accessibility under Apache 2.0 allows developers to fine-tune the model for custom applications, democratizing advanced AI tools for a global community.
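The resolution range and aspect ratios listed in the specs can be turned into concrete pixel dimensions with a small helper. The sketch below is illustrative only: the function name `dimensions_for` and the snapping of each side to a multiple of 16 are assumptions, not part of any documented Qwen-Image API. Only the 256 to 1536 bounds come from the specs quoted in this article.

```python
from fractions import Fraction

MIN_SIDE = 256    # lower bound quoted in the specs
MAX_SIDE = 1536   # upper bound quoted in the specs
STEP = 16         # assumption: snap each side to a multiple of 16


def dimensions_for(aspect: str, long_side: int = MAX_SIDE) -> tuple[int, int]:
    """Return (width, height) in pixels for an aspect ratio such as "16:9"."""
    w_part, h_part = (int(part) for part in aspect.split(":"))
    if w_part <= 0 or h_part <= 0:
        raise ValueError("aspect ratio parts must be positive")
    if not MIN_SIDE <= long_side <= MAX_SIDE:
        raise ValueError(f"long_side must be within {MIN_SIDE}..{MAX_SIDE}")

    ratio = Fraction(w_part, h_part)
    if ratio >= 1:
        # Landscape or square: the width is the long side.
        width, height = Fraction(long_side), long_side / ratio
    else:
        # Portrait: the height is the long side.
        width, height = long_side * ratio, Fraction(long_side)

    def snap(value: Fraction) -> int:
        # Round to the nearest multiple of STEP, then clamp into the range.
        # Clamping can distort very extreme ratios such as 10:1.
        snapped = round(value / STEP) * STEP
        return max(MIN_SIDE, min(MAX_SIDE, snapped))

    return snap(width), snap(height)


print(dimensions_for("16:9"))  # (1536, 864)
print(dimensions_for("4:3"))   # (1536, 1152)
```

Passing a smaller `long_side`, for example `dimensions_for("16:9", long_side=1024)`, gives a lighter output size for quick drafts.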

Hands-On Impressions / Early Review

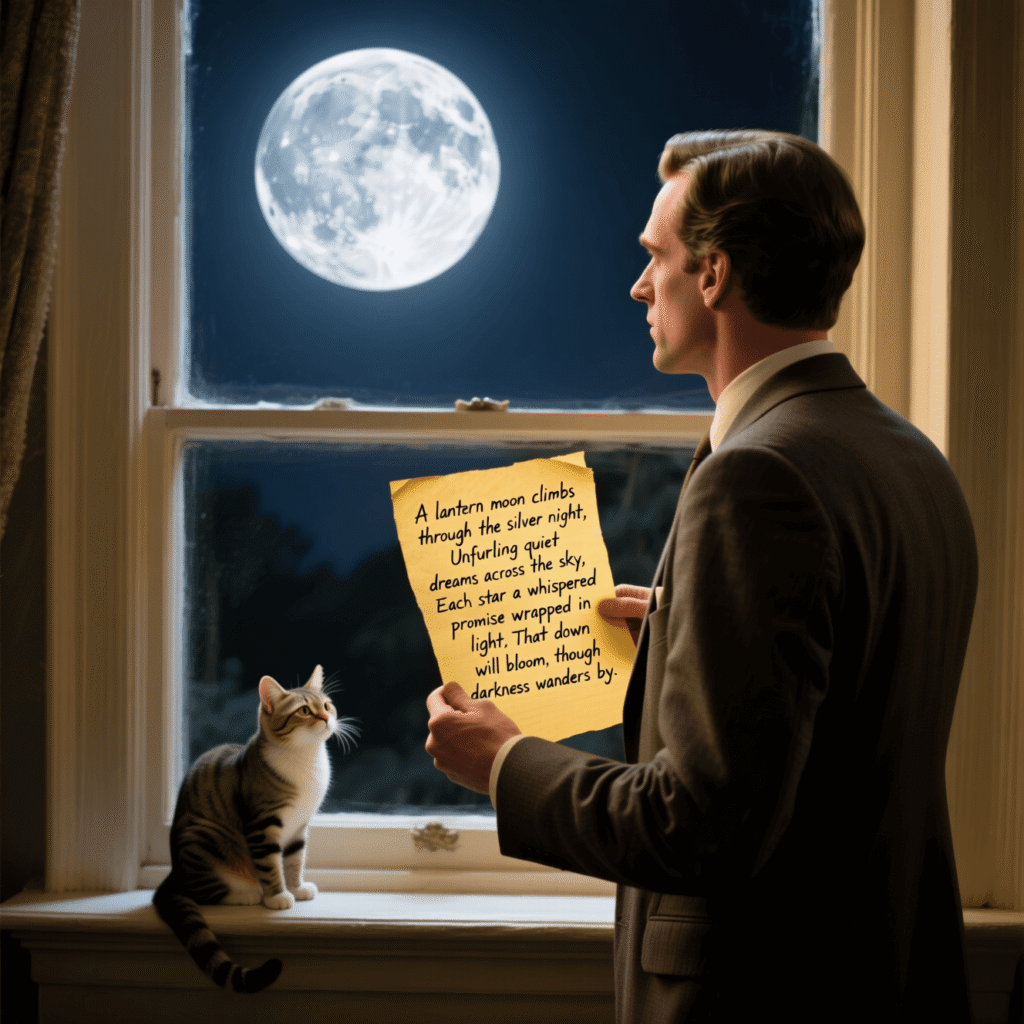

Based on demo footage and detailed reports from Alibaba’s Qwen team and VentureBeat, Qwen-Image delivers an impressive experience. Given a prompt describing a bookstore window display, the model rendered signs such as “New Arrivals This Week” and book titles clearly, even in small fonts. The UI, accessible via Qwen Chat’s “Image Generation” mode, is user-friendly and lets users enter a prompt and pick a frame size with little friction. Generation is snappy at about 5 seconds per image, though high-resolution outputs (1536×1536) demand significant VRAM (40-44GB for FP16). Editing capabilities shine in scenarios like changing a night scene to a sunny morning while preserving details. On the downside, infographic generation occasionally places text vaguely, and realistic scenes may feel slightly disjointed. For developers and designers, the open-source license and strong benchmark results make it a compelling choice, despite minor text integration issues in photorealistic outputs.
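The 40-44GB figure is consistent with simple arithmetic: 20 billion parameters at 2 bytes each (FP16) come to 40 GB for the weights alone. The sketch below works that estimate through. It is a rough back-of-the-envelope calculation, not a measured profile: the function names are hypothetical, and it counts only the 20B parameters quoted above, ignoring activations and any auxiliary components, which presumably account for the remaining few gigabytes.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weight_memory_gb(num_params: float, dtype: str = "fp16") -> float:
    """Weight memory in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def weight_memory_gib(num_params: float, dtype: str = "fp16") -> float:
    """Weight memory in binary gibibytes (1 GiB = 2**30 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


params = 20e9  # the 20-billion-parameter figure from the article
print(weight_memory_gb(params, "fp16"))             # 40.0
print(round(weight_memory_gib(params, "fp16"), 1))  # 37.3
print(weight_memory_gb(params, "int8"))             # 20.0
```

The `int8` line shows why quantized variants, where available, are attractive on smaller GPUs: halving the bytes per parameter halves the weight footprint.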

The sample images in this article were generated by Qwen-Image. Image source: https://qwenlm.github.io/blog/qwen-image/

Comparison with Competitors

| Feature | Qwen-Image | Midjourney v6 | Stable Diffusion 3 |
|---|---|---|---|
| Parameters | 20B (MMDiT) | Proprietary | 2B for SD3 Medium, up to ~8B for the largest variant (MMDiT) |
| Text Rendering | Multi-line, bilingual, high fidelity | Limited, often distorted | Improved but inconsistent |
| Image Editing | Style transfer, object add/remove | Basic editing, less precise | Advanced but less coherent |
| Benchmarks | SOTA on GenEval, LongText-Bench | Strong in art styles, weaker in text | Competitive, lags in text rendering |
| Resolution | Up to 1536×1536 | Up to 2048×2048 | Up to 1024×1024 |
| Accessibility | Open-source (Apache 2.0) | Subscription-based | Open weights (Stability AI community license, not Apache 2.0) |
| Release Date | August 4, 2025 | December 2023 | June 2024 (SD3 Medium) |

Analysis: Qwen-Image outperforms Midjourney in text rendering, especially for complex layouts, and its open-source license contrasts with Midjourney’s paid subscription. Stable Diffusion 3 also ships open weights, though under Stability AI’s own community license rather than Apache 2.0, and it lags in text accuracy and editing coherence. Qwen-Image’s 20B parameters likely contribute to its stronger results, at the cost of more computational power. Midjourney excels in artistic styles, while Qwen-Image’s editing precision and bilingual support make it well suited to professional use.

Use-Case Scenarios

- Graphic Designers Creating Marketing Materials: Qwen-Image’s text rendering excels for posters and infographics, like a movie poster with titles and subtitles perfectly integrated. Designers can specify fonts and layouts, saving hours of post-editing.

- E-commerce Teams Producing Product Visuals: Retailers benefit from generating product shots with legible labels, such as a coffee shop sign reading “Qwen Coffee $2 per cup.” The model’s editing tools allow quick adjustments, like changing backgrounds or adding logos.

- Educators Designing Classroom Content: Teachers can create engaging slides or infographics, like a wellness presentation with clear text and icons. Qwen-Image’s ability to handle multi-line text ensures professional, readable outputs for lectures or handouts.

Pricing, Availability & Variants

Qwen-Image is open-source under Apache 2.0 and freely available via Hugging Face and ModelScope. Users can also access it through Qwen Chat’s “Image Generation” mode or hosted platforms like Novita AI ($0.02 per image). Running the model yourself carries no licensing fee, though FP16 inference needs a high-end GPU (40-44GB VRAM). The model supports customizable resolutions (256×256 to 1536×1536) and aspect ratios (e.g., 1:1, 16:9). The editing version is slated for a future release, with no confirmed date. The model is available globally, and the Apache 2.0 license permits commercial as well as research use; hosted platforms like KontextFlux.io offer another route for commercial applications. No promotional bundles are offered, but community feedback is encouraged to shape future updates.
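For readers who want to run the Hugging Face release locally, a minimal sketch using the `diffusers` library might look like the following. Several details are assumptions rather than confirmed facts from this article: the repository id `Qwen/Qwen-Image`, the choice of `bfloat16`, the default of 50 steps, and the helper name `generate_image`. The size check simply enforces the 256 to 1536 range quoted above before any heavy model loading happens.

```python
MODEL_ID = "Qwen/Qwen-Image"     # assumed Hugging Face repository id
MIN_SIDE, MAX_SIDE = 256, 1536   # resolution range quoted in this article


def generate_image(prompt: str, width: int = 1024, height: int = 1024,
                   steps: int = 50):
    """Generate one image with Qwen-Image (hypothetical helper).

    Requires the `torch` and `diffusers` packages and a CUDA GPU with
    enough VRAM for the 20B-parameter model.
    """
    # Validate the requested size first, so bad input fails fast.
    for side in (width, height):
        if not MIN_SIDE <= side <= MAX_SIDE:
            raise ValueError(
                f"each side must be within {MIN_SIDE}..{MAX_SIDE}, got {side}"
            )

    # Heavy imports are deferred so the check above needs no GPU stack.
    import torch
    from diffusers import DiffusionPipeline

    pipe = DiffusionPipeline.from_pretrained(MODEL_ID, torch_dtype=torch.bfloat16)
    pipe = pipe.to("cuda")

    result = pipe(
        prompt=prompt,
        width=width,
        height=height,
        num_inference_steps=steps,
    )
    return result.images[0]  # a PIL image
```

A call such as `generate_image('A coffee shop sign reading "Qwen Coffee $2 per cup"', 1536, 864).save("sign.png")` would write the result to disk. In a real application the pipeline should be loaded once and reused rather than rebuilt on every call.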

Expert Opinion / Verdict

Qwen-Image sets a new standard for AI image generation, blending state-of-the-art text rendering with robust editing capabilities. Its open-source model empowers developers, while its benchmark performance (e.g., 0.91 on GenEval) rivals proprietary giants like GPT-4o. The ability to handle complex layouts and bilingual text makes it a top pick for professional creators, though infographic clarity needs refinement. The high VRAM requirement may limit casual use, but its accessibility via Qwen Chat broadens its reach.

Ratings:

- Design: ★★★★☆ (Intuitive, but text integration needs polish)

- Performance: ★★★★★ (SOTA benchmarks, fast generation)

- Value: ★★★★★ (Free, open-source, high utility)

- Ecosystem Integration: ★★★★☆ (Strong framework support, editing version pending)

Recommendation: Ideal for designers, developers, and educators needing precise text rendering and editing. A must-try for open-source AI enthusiasts.

Conclusion & Call-to-Action

Qwen-Image is a bold step forward in AI-driven visual creation, offering unmatched text rendering and editing precision. Its open-source nature invites innovation, making it a vital tool for creators and researchers. Have you tried Qwen-Image yet? Share your experiences in the comments or explore our guide on maximizing AI image generation tools. Don’t forget to share this post with your network!

References

[1] https://qwenlm.github.io/blog/qwen-image/

[2] https://venturebeat.com/ai/qwen-image-is-a-powerful-open-source-new-ai-image-generator-with-support-for-embedded-text-in-english-chinese/

[3] https://arxiv.org/abs/2508.02324